Mass Hunting For Misconfigured S3 Buckets

In this publication, I would like to cover how to efficiently check millions of potentially misconfigured S3 buckets in a very short amount of time. The approach I will share can be fully automated, so you can use it as a guide for updating your own Bug Bounty automation framework.

If you are not very familiar with the Axiom tool, I highly suggest you read the previous parts, since they describe its main features in more depth.

What are S3 Buckets?

An S3 bucket is a component of Amazon’s AWS cloud whose main purpose is storing objects. An object is the basic unit of this type of storage, and the term is used instead of file. Amazon S3 buckets are similar to file folders and can be used to store, retrieve, back up and access objects. This is very important to understand, because a bucket that is not properly configured can have severe consequences: sensitive information could leak, malicious users could create malicious files inside the bucket, important files could be deleted, and so on.

AWS S3 CLI Cheat sheet

This testing process can be done at mass scale for thousands of Bug Bounty programs at once by using custom wordlists, permutation techniques and, of course, Axiom. But before diving deep into hunting, let’s explore some basic AWS S3 commands which you will need when weeding out false positives, that is, when checking bucket permissions manually.

- Listing objects in S3 bucket:

aws s3 ls s3://bucket-name

- Uploading an Object:

aws s3 cp /path/to/local/file s3://bucket-name/path/to/s3/key

- Downloading an Object:

aws s3 cp s3://bucket-name/path/to/s3/key /path/to/local/file

- Removing an Object:

aws s3 rm s3://bucket-name/path/to/s3/key
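When validating many candidates by hand, it can help to wrap the listing command in a small helper. The sketch below is only an illustration: the function names and the verdict labels (listable, private, missing, disabled, unknown) are made up for this example, while `--no-sign-request` is the AWS CLI option for sending an anonymous request and the matched strings are standard S3 error codes.

```shell
# classify_s3_error: map the error text printed by a failed `aws s3 ls`
# to a one-word verdict. The verdict labels are hypothetical.
classify_s3_error() {
  case "$1" in
    *NoSuchBucket*)      echo "missing" ;;   # bucket name is not taken
    *AccessDenied*)      echo "private" ;;   # bucket exists, listing is blocked
    *AllAccessDisabled*) echo "disabled" ;;  # bucket exists, all access is off
    *)                   echo "unknown" ;;   # anything else, inspect by hand
  esac
}

# check_bucket (hypothetical helper): try an anonymous listing of one bucket.
# A zero exit status means the listing succeeded without credentials.
check_bucket() {
  cb_bucket=$1
  if cb_output=$(aws s3 ls "s3://$cb_bucket" --no-sign-request 2>&1); then
    echo "listable"
  else
    classify_s3_error "$cb_output"
  fi
}

# Usage (needs the AWS CLI installed):
#   check_bucket bucket-name
```

An "unknown" verdict deserves a manual look, since the CLI may also exit with a non-zero status for reasons other than permissions, such as network errors.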

Preparing wordlists

To demonstrate that it is possible to hack on a large number of programs at once, I will use ProjectDiscovery’s public bug bounty program list from GitHub. I will keep only the programs which offer a bounty and select their names and root domains with jq:

curl -s https://raw.githubusercontent.com/projectdiscovery/public-bugbounty-programs/main/chaos-bugbounty-list.json | jq ".programs[] | select(.bounty==true) | .name,.domains[]" -r > base_wordlist.txt

The base wordlist also needs to be formatted to lowercase everything and remove special characters:

cat base_wordlist.txt | tr '[:upper:]' '[:lower:]' | sed "s/[^[:alnum:].-]//g" | sort -u > tmp && mv tmp base_wordlist.txt
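To see what this cleanup does to individual entries, the same tr and sed filters can be wrapped in a function and fed a few sample lines. This is a minimal sketch: the function name and the sample entries are made up, and the extra step that drops empty lines is my own addition rather than part of the original command.

```shell
# normalize_wordlist: lowercase stdin, strip everything except letters,
# digits, dots and hyphens, drop lines left empty, then sort and deduplicate.
normalize_wordlist() {
  tr '[:upper:]' '[:lower:]' \
    | sed 's/[^[:alnum:].-]//g' \
    | sed '/^$/d' \
    | sort -u
}

# Sample entries (made up for illustration).
printf '%s\n' 'Acme Corp!' 'acme.com' 'ACME.com' | normalize_wordlist
```

The three sample lines collapse into two entries, acme.com and acmecorp, because the space and the exclamation mark are stripped and the duplicate domain is removed by sort -u.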

Now we have a pretty good base wordlist to start from. We need to do some permutations in order to form a good list for brute forcing later. I like to use nahamsec’s lazys3 script just for this type of permutation. For brute forcing it’s pretty outdated, but for generating wordlists it will do the job.

You are also going to need to add some prefixes of your own to the common_bucket_prefixes.txt file, since the default ones have most likely been exhausted by other hunters already!

I have added some prefix words inspired by https://buckets.grayhatwarfare.com/top_keywords/. It is a very good website for hunting misconfigured S3 buckets as well, since it stores all scanned files and directories! The only drawback I have found is that the list is not updated very often, maybe only once a month. So for this tutorial, I’ve just added the common words used in object storage buckets.

Next, it’s time to form the final wordlist. I have modified the end of the Ruby script so that it does not scan and just prints the permutations to the screen.

Finally, I have generated the final wordlist from the base wordlist:

for i in `cat ../base_wordlist.txt`; do ruby lazys3.rb $i | tee -a ../wordlist.txt; done
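If you would rather not patch the Ruby script, the permutation idea can also be sketched in plain shell. This is a stand-in under my own assumptions, not lazys3’s actual pattern set: the function name is hypothetical, and it only joins each base name with each prefix word in both orders using a hyphen, a dot, or no separator.

```shell
# permute_bucket_names NAME PREFIX_FILE
# Print NAME itself plus NAME combined with every word in PREFIX_FILE,
# in both orders, joined by "-", "." or nothing.
permute_bucket_names() {
  pb_name=$1
  pb_prefix_file=$2
  echo "$pb_name"
  while IFS= read -r pb_word; do
    [ -n "$pb_word" ] || continue        # skip blank lines
    for pb_sep in "-" "." ""; do
      echo "${pb_name}${pb_sep}${pb_word}"
      echo "${pb_word}${pb_sep}${pb_name}"
    done
  done < "$pb_prefix_file"
}

# Usage, assuming the file names from this guide:
#   while IFS= read -r base; do
#     permute_bucket_names "$base" common_bucket_prefixes.txt
#   done < base_wordlist.txt | sort -u > wordlist.txt
```

Each base name produces six candidates per prefix word plus the bare name, so the final wordlist grows linearly with the size of the prefix file.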

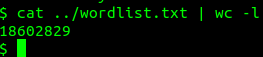

After running this permutation script, depending on the size of common_bucket_prefixes.txt, we will have a large wordlist of potential S3 bucket candidates:

As you can see, my wordlist contains over 18 million different combinations!

Running axiom scan for S3’s

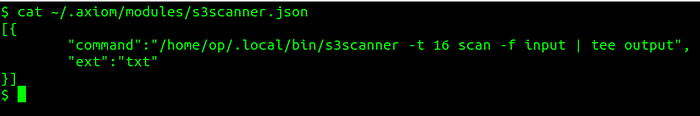

We will be running the s3scanner Axiom module to check those potential bucket names and their permissions. It could take multiple days to check all the words, depending on how many Axiom instances we have. Luckily, we can modify the module a little to make it run even faster.

I have set 16 threads, since the default is 4. A larger number could cause problems, like rate limiting or false positives.

I would also like to split the final wordlist into chunks of 1 million combinations per file, in case something goes wrong during the scan:

split -l 1000000 wordlist.txt
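With its default settings, split names the chunks xaa, xab, xac and so on. One way to take advantage of the chunking is to scan the files one by one and remember which ones have finished, so an interrupted run can be resumed. The loop below is only a sketch: the function name, the `.done` marker files, the `.results` output names and the `SCAN_CMD` override are my own conventions, and it assumes axiom-scan accepts `-o` for the output file.

```shell
# scan_chunks CHUNK...
# Scan each chunk once. A chunk with a ".done" marker is skipped, so the
# function can be rerun after a failure without repeating finished work.
# SCAN_CMD is a hypothetical override, handy for dry runs.
scan_chunks() {
  for sc_chunk in "$@"; do
    if [ -e "${sc_chunk}.done" ]; then
      echo "skipping ${sc_chunk} (already scanned)" >&2
      continue
    fi
    ${SCAN_CMD:-axiom-scan} "$sc_chunk" -m s3scanner -o "${sc_chunk}.results" || {
      echo "scan failed on ${sc_chunk}, stopping" >&2
      return 1
    }
    touch "${sc_chunk}.done"   # mark the chunk as finished
  done
}

# Usage, after the fleet is up:
#   scan_chunks xaa xab xac
```

Using a separate marker file instead of checking the results file avoids rescanning chunks that completed but found nothing.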

For this demonstration, I will show how fast Axiom can scan the first 1 million buckets and their permissions using 10 instances:

axiom-fleet s3scanner -i 10

axiom-scan xaa -m s3scanner | grep -a bucket_exists

As you can see, I found a bucket with the Read permission enabled within a couple of minutes of scanning. This does not yet mean that it is vulnerable: you need to check it manually and try to validate whether it really belongs to the target organization.

To filter out the results even more, you could also append the following to the last command:

| grep -aE "Read|Write|Full"

If you have followed this guide, you are ready to build pretty powerful automation with the help of distributed machines. This guide only showed how misconfigured AWS S3 buckets can be scanned for faulty permission models. Remember that there could be similar issues on Azure Blob Storage, DigitalOcean Spaces, Google Cloud, and more. It’s up to you to develop your own twist and use your own tools to find those misconfigurations. So what are you waiting for? Let’s get your hands dirty and do some hacking!

I am active on Twitter, check out some content I post there daily! If you are interested in video content, check my YouTube. Also, if you want to reach me personally, you can visit my Discord server. Cheers!